Reliability of LLMs as medical assistants for the general public: a randomized preregistered study - Nature Medicine

A quality study by researchers from the Oxford Internet Institute and the Nuffield Department of Primary Care Health Sciences at the University of Oxford, published in the scientific journal Nature Medicine, throws some cold water on the often benchmaxxed claims of AI diagnosis performance.

Generative AI systems are lossy data storage and retrieval systems, and they can be excellent at finding a needle in the haystack or retrieving matching diagnoses from our ever-growing, planet-scale data stash.

At the same time, medicine is a field full of out-of-distribution patterns, with humans being far more diverse in their genetic makeup, microbiome, etc. than most people realize. I tend to frame this as "look how different everyone around you looks on the outside and understand that the same is true on the inside."

Out-of-distribution patterns confound diagnosis. What makes the difference is a well-trained physician who dissects the information from all sources, including AI, to make a decision and separate hallucination from probable diagnosis.

It's the dilemma of transformer-based AI systems: the operator needs to be skilled enough to challenge the highly confident but often wrong predictions the algorithm makes.


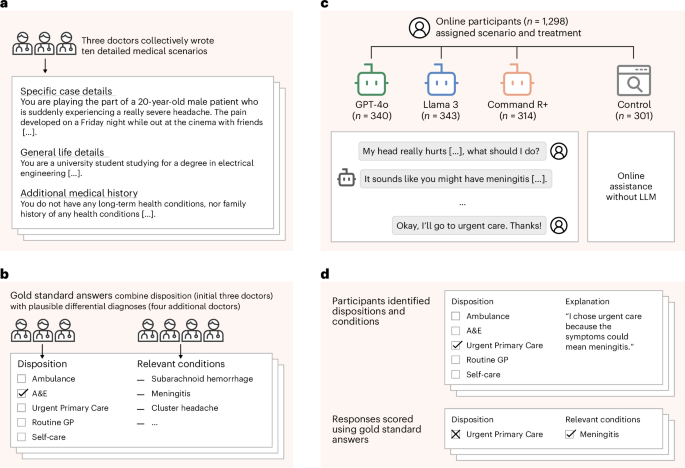

In a randomized controlled study involving 1,298 participants from a general sample, performance of humans when assisted by a large language model (LLM) was sensibly inferior to that of the LLM alone when assessing ten medical scenarios leading to disease identification and recommendations for treatment.