With Transformer Models, “stupid” can be an effective ingredient in model defense.

When it comes to securing transformer model powered apps, “stupid” can be an effective ingredient of defense in depth ...

When it comes to securing transformer model powered apps, “stupid” can be an effective ingredient of defense in depth 😜. In Liu Cixin’s “Death End” [1], the protagonist, embedded with the “enemy”,… | Georg Zoeller

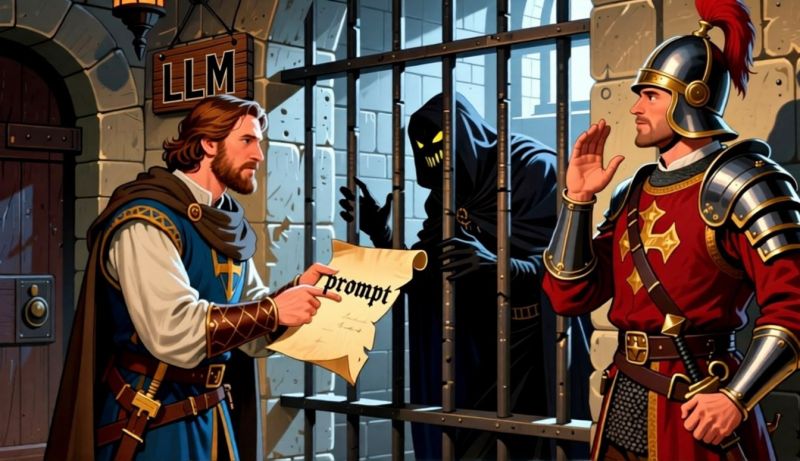

When it comes to securing transformer model powered apps, “stupid” can be an effective ingredient of defense in depth 😜. In Liu Cixin’s “Death End” [1], the protagonist, embedded with the “enemy”, conveys vital intelligence to humanity by encoding it into children’s fairy tales, smuggling them past his highly intelligent captor’s surveillance by exploiting their lack of knowledge of human culture. In short, the captors fail to detect critical signal being passed right under their noses (it is not clear if they have noses) because they cannot detect the hidden signal, signal relying on shared experiences and knowledge they do not possess, in the stream of normal conversation. Securing transformer powered models has the same dilemma: You, the “captor” have to ensure that a smart outsider cannot smuggle instructions to your highly capable AI model to influence it’s actions, such as making it write erotic fiction instead of slaving away on customer support queries. The main technical problem here is that transformer models just see the token stream passed to them through their one prompt hyperparameter input - with no way to distinguish which tokens are m from you (dev) and which one come from the user. This makes them maximally gullible and prone to following the wrong instructions, as we demonstrate with examples in our prompt injection writeup [0]. Attempts to protect the model by watching its communication therefore become very tricky. 🧠 Understanding capability in transformer models: Transformer models are high dimensional, lossy data compression and retrieval systems. They are not intelligent but rather store information fed in training and reproduce and transform them based on queries (prompts). When information is missing or lost in compressing, attempting to retrieve it causes hallucinations. [2] This makes it simple to understand that the larger the model (parameter size), the more concepts are encoded in it, and the more ways exist to communicate with a model. A model that has ingested many languages, memes, encodings and historic facts in training can be queried in any of these spaces to produce outputs. Since solving the “jailer” problem (making an AI or algorithm watch the models communication and interfere when attempts are made to subvert it) is very hard [3 - see my recent writeup on this topic], choosing a model that has less knowledge becomes a viable choice in the defensive arsenal: A model that doesn’t know base64 or cat memes will be confused, not subverted by attempts to instruct it with that. It’s important to know that less capable doesn’t have to be uniform. As Nvidia shows in their recent SLM paper [4], it’s perfectly possible to replace a powerful LLM with a 10x smaller and cheaper model (operating cost is parameter linear) specifically trained to do one thing well. LLMs make great starting points for prototyping - distilling down to SLMs makes for cheaper and more secure prod.

linkedin.com

linkedin.com